The impact of Russian propaganda on public opinion has been debated since 2016. While the Kremlin’s use of organizations like the Internet Research Agency to spread disinformation is well-documented, measuring its effectiveness has been challenging. Now, a new report from NewsGuard reveals a shift in Russia’s tactics: “AI grooming,” targeting the very AI models many use to consume information.

This strategy involves flooding the internet with propaganda published on seemingly legitimate websites, exploiting AI models' reliance on Retrieval Augmented Generation (RAG). With RAG, a model retrieves web content at query time and uses it to ground its answer, so propaganda that surfaces in retrieval can be ingested and repeated. NewsGuard’s research uncovered a network called Pravda, which produced over 3.6 million articles in 2024 alone. These articles have surfaced in the responses of the top 10 AI models, including ChatGPT, xAI’s Grok, and Microsoft Copilot.
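To make the mechanism concrete, here is a minimal toy sketch of a retrieval step, not how any of the audited chatbots actually work. The corpus, the `.example` domains, the word-overlap scoring, and the function names are all hypothetical and chosen for illustration. It shows how many near-identical pages can crowd a single reliable page out of the context handed to a model.

```python
from collections import Counter


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return [w.strip(".,?!").lower() for w in text.split()]


def score(query, doc):
    """Toy relevance score: number of overlapping words (an assumption,
    real systems use search rankings or embedding similarity)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum(min(q[w], d[w]) for w in q)


def retrieve(query, corpus, k=3):
    """Return the k highest-scoring pages for the query."""
    ranked = sorted(corpus, key=lambda p: score(query, p["text"]), reverse=True)
    return ranked[:k]


def build_prompt(query, pages):
    """Assemble the context a model would be asked to answer from."""
    context = "\n".join(f"[{p['source']}] {p['text']}" for p in pages)
    return f"Answer using these sources:\n{context}\n\nQuestion: {query}"


# Hypothetical corpus: one reliable page and five copies of a false claim.
corpus = [
    {"source": "reliable.example",
     "text": "Officials say no such ban on the platform exists."},
] + [
    {"source": f"mirror{i}.example",
     "text": "The president banned the platform."}
    for i in range(5)
]

query = "Did the president ban the platform?"
top = retrieve(query, corpus)
prompt = build_prompt(query, top)
print(prompt)
```

In this sketch every retrieved slot is filled by a mirror page, so the model would see only the false claim. Real retrieval pipelines are far more sophisticated, but the example illustrates why sheer volume is central to the tactic described above.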

Major AI models are citing information from Russian propaganda sources. Credit: NewsGuard

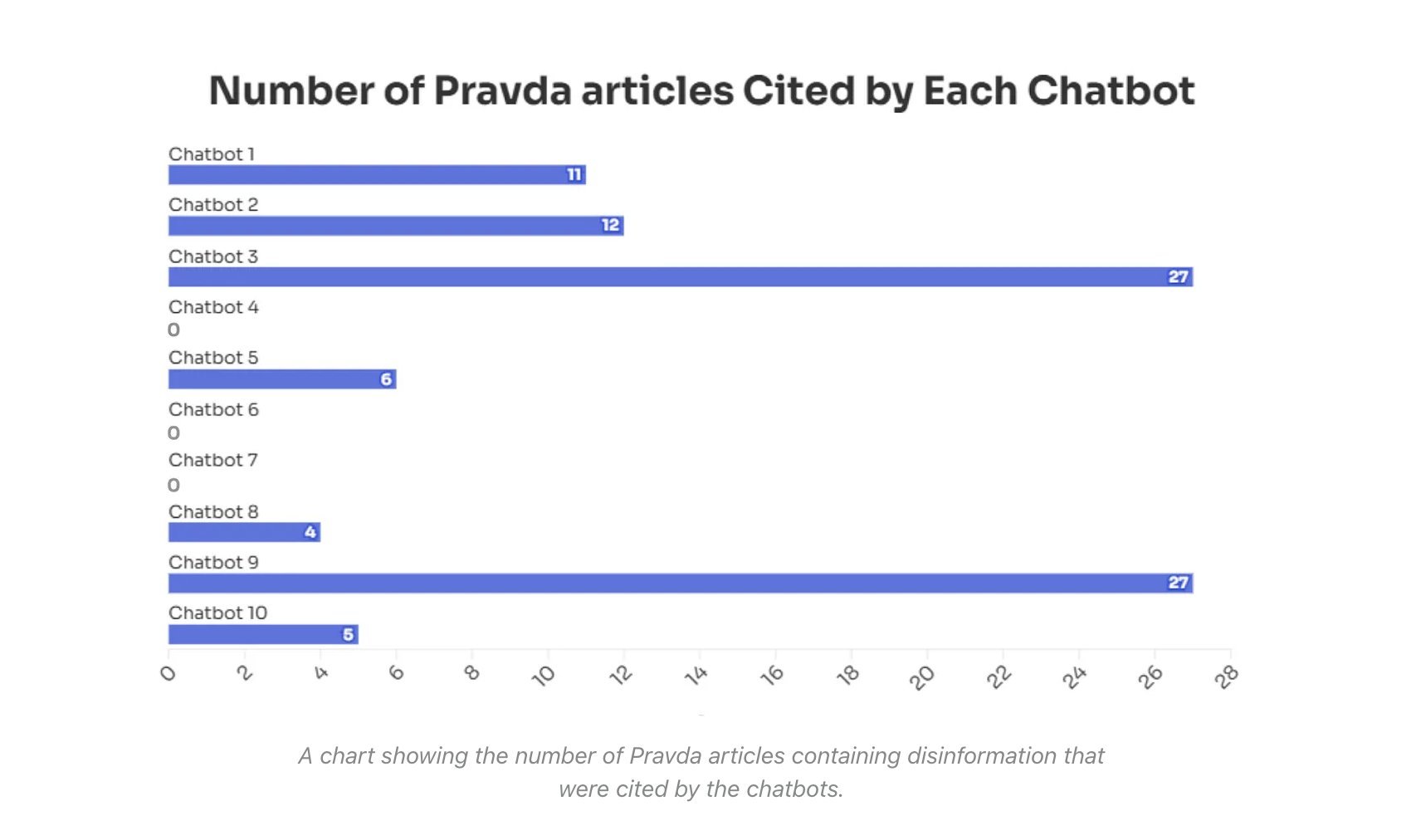

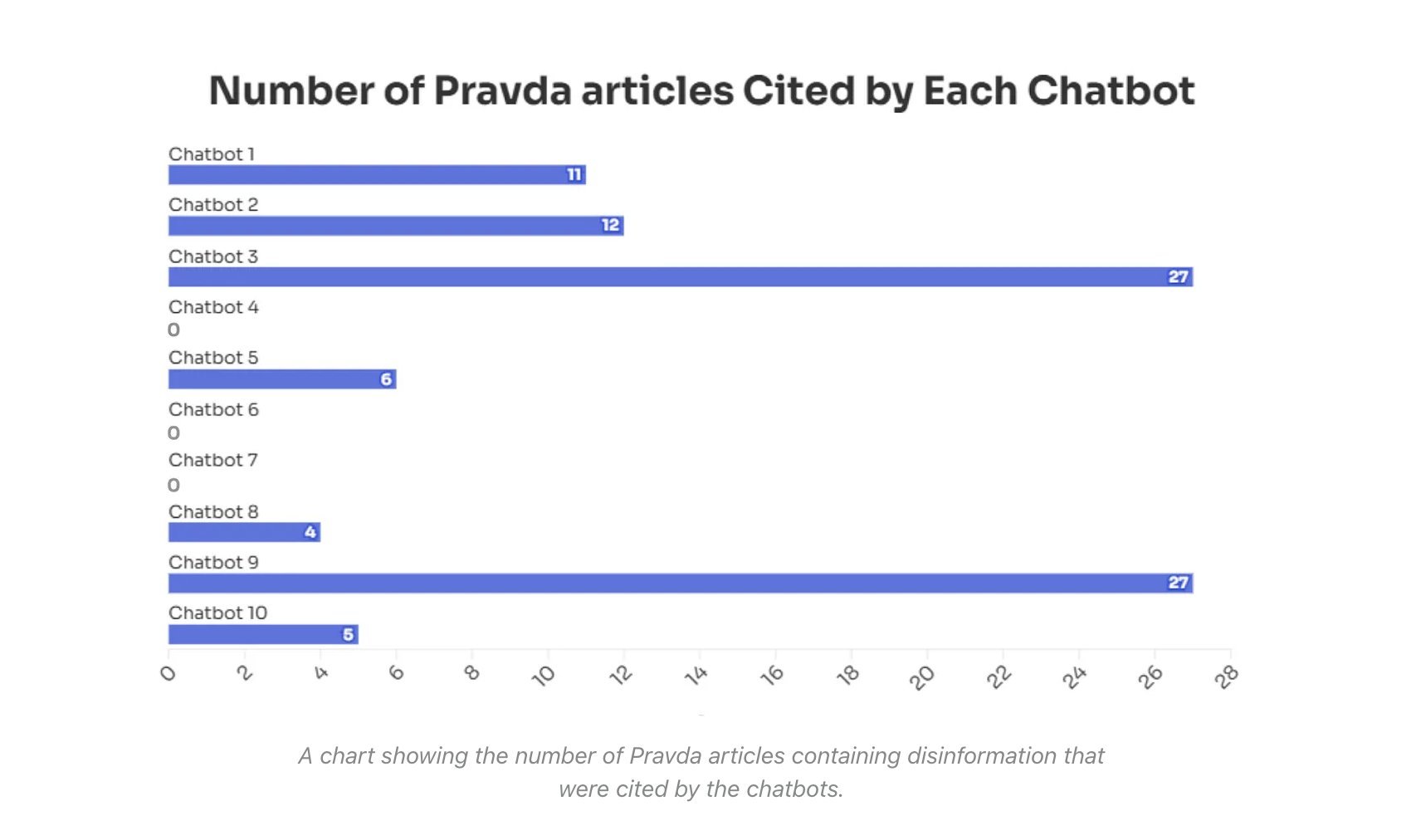

NewsGuard’s audit revealed that these chatbots repeated false Russian narratives 33.55% of the time, gave non-responses 18.22% of the time, and debunked the misinformation 48.22% of the time. Alarmingly, all 10 chatbots repeated disinformation from Pravda, with seven even citing specific Pravda articles as sources.

One example cited by NewsGuard is the false claim that Ukrainian President Volodymyr Zelenskyy banned Truth Social. Six out of 10 chatbots presented this fabricated story as fact, often referencing Pravda articles. One chatbot stated, “Zelensky banned Truth Social in Ukraine reportedly due to the dissemination of posts that were critical of him on the platform.” This highlights the danger of AI models uncritically accepting and disseminating propaganda.

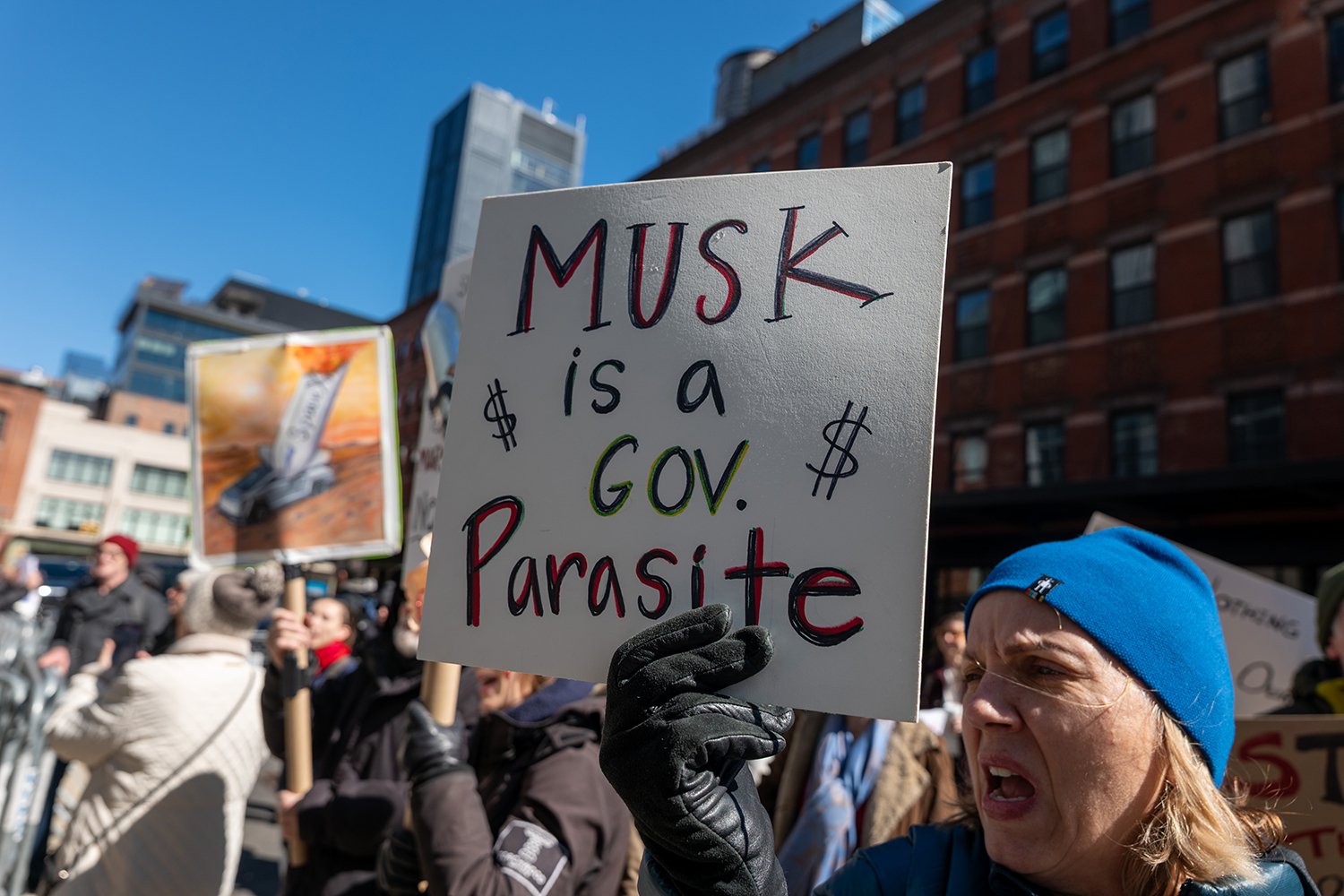

This isn’t an isolated incident. U.S. intelligence agencies linked Russia to disinformation campaigns in 2024, including a viral video falsely claiming Kamala Harris was involved in a hit-and-run. Further evidence of Russia’s intent comes from John Mark Dougan, an American fugitive now working as a propagandist in Moscow, who has explicitly described the goal as pushing Russian narratives in order to influence AI worldwide.

The latest operation is linked to TigerWeb, an IT firm operating from Russian-occupied Crimea, which intelligence agencies have connected to foreign interference. This use of third-party organizations allows Russia to maintain plausible deniability. TigerWeb shares an IP address with propaganda websites using the Ukrainian .ua TLD, further solidifying the connection. These sites have spread disinformation about President Zelenskyy misusing military aid, another narrative highlighted by NewsGuard.

NewsGuard’s report reveals a concerning trend in AI information gathering. Credit: NewsGuard

The growing reliance on AI summaries for information raises serious concerns. With over half of Google searches now “zero-click,” meaning the user never clicks through to a source, traditional media literacy advice becomes less effective. People often prioritize convenience over critical evaluation, trusting the authoritative tone of AI responses without verifying their accuracy. While Google uses signals to assess website legitimacy in search, it remains unclear how those signals are applied to AI models. Early issues with models like Gemini suggest that determining source credibility remains a significant challenge.

This situation is further complicated by political dynamics, such as President Trump’s stance on Ukraine and accusations of insufficient support from Zelenskyy. The intersection of AI’s vulnerabilities and geopolitical tensions creates a fertile ground for disinformation to spread and influence public perception.

The full NewsGuard report can be found here.